Scaling Semantic Search for Agentic AI: Supabase, Postgres, and Next.js in 2026

Introduction

Building capable Agentic AI systems takes more than a large language model; it also demands efficient, semantically aware data retrieval. Keyword search is brittle: it matches literal strings and misses the intent behind a complex query. In 2026, relying on LIKE operators to supply context to an agent is a serious architectural oversight, because relevant material goes unretrieved whenever the wording differs. Vector embeddings paired with a modern backend stack offer a robust, scalable way to inject external knowledge.

The Limitations of Lexical Search

Consider an Agentic AI tasked with answering questions about your platform's documentation. A user asks: "How do I integrate a webhook?" A keyword search returns articles containing "webhook" but misses a guide on "event listeners" that never uses the term, even though the guide is semantically relevant. This gap reduces the AI's accuracy and utility. Semantic understanding is crucial for effective Retrieval-Augmented Generation (RAG) patterns.

Vector Embeddings: The Core of Semantic Understanding

Vector embeddings transform text, images, or other data into high-dimensional numerical arrays. These vectors capture the semantic meaning and contextual relationships of the original data. Documents with similar meanings will have vectors positioned closer together in this multi-dimensional space. This allows for proximity search, identifying not just keyword matches, but conceptual similarities.
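To make "closer together" concrete, here is a minimal sketch of proximity ranking with cosine similarity. The three-dimensional vectors and document titles are invented for illustration; a real embedding model produces vectors with hundreds or thousands of dimensions, and the names cosineSimilarity and rankBySimilarity are hypothetical helpers rather than part of any library.

```typescript
// Toy "embeddings" made up for this example; real vectors come from an embedding model.
type EmbeddedDoc = { title: string; vector: number[] };

// Cosine similarity: dot product divided by the product of the vector lengths.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length === 0 || a.length !== b.length) {
    throw new Error("Vectors must be non-empty and of equal length.");
  }
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) {
    throw new Error("Cosine similarity is undefined for a zero vector.");
  }
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Return a copy of the documents sorted from most to least similar to the query.
function rankBySimilarity(query: number[], docs: EmbeddedDoc[]): EmbeddedDoc[] {
  return [...docs].sort(
    (x, y) => cosineSimilarity(query, y.vector) - cosineSimilarity(query, x.vector)
  );
}

const toyDocs: EmbeddedDoc[] = [
  { title: "Billing FAQ", vector: [0.1, 0.9, 0.2] },
  { title: "Configuring event listeners", vector: [0.85, 0.15, 0.3] },
  { title: "Webhook quickstart", vector: [0.9, 0.1, 0.2] },
];

// Pretend this is the embedding of "How do I integrate a webhook?"
const webhookQuery = [0.88, 0.12, 0.25];
const ranked = rankBySimilarity(webhookQuery, toyDocs);
console.log(ranked.map((d) => d.title));
```

In this toy data the event-listener guide scores far above the billing page even though it shares no keyword with the query, which is the behavior lexical search cannot provide.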

Supabase and pgvector for Full-stack Development

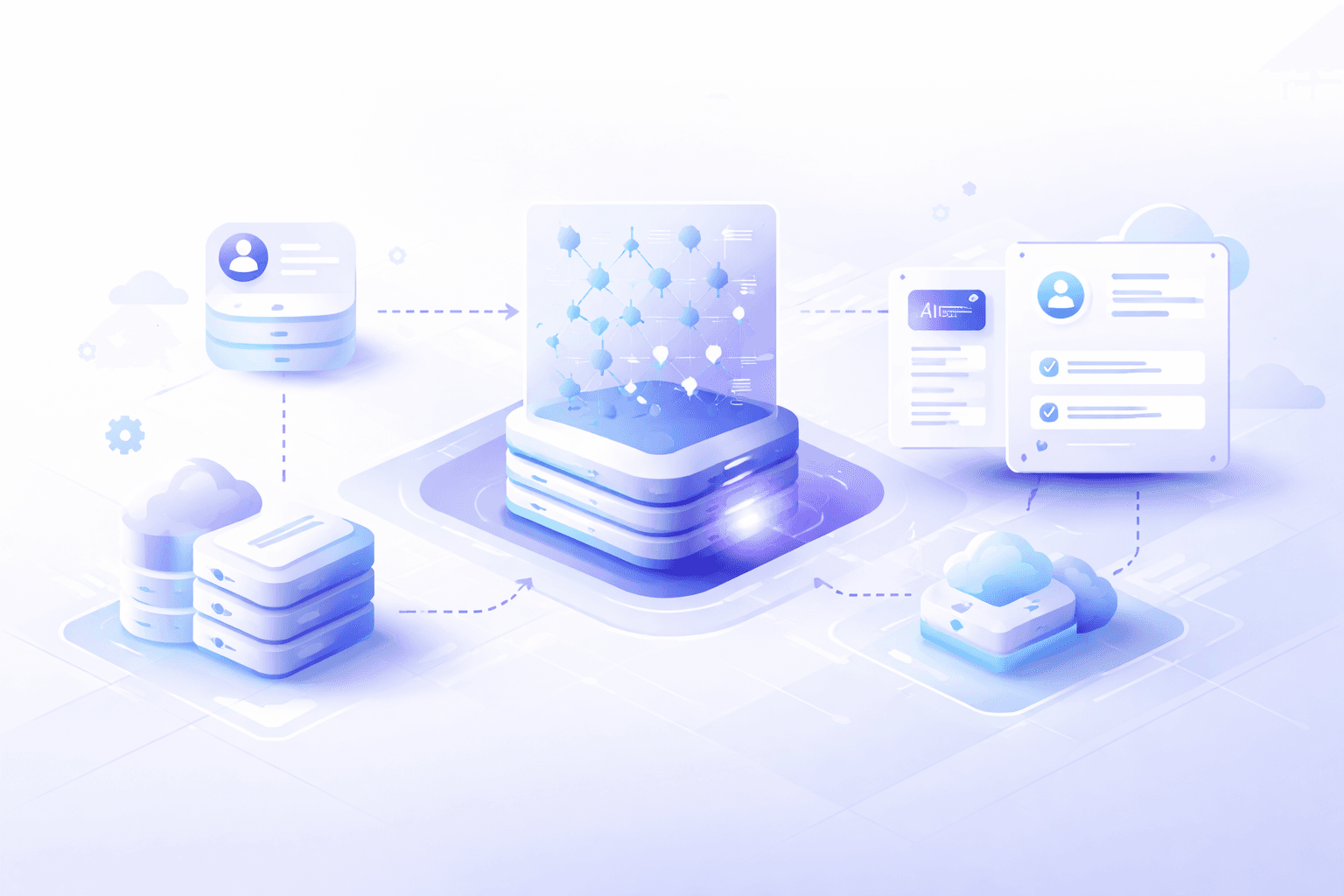

Supabase, built on Postgres, offers a production-ready way to store and query these embeddings via the pgvector extension. This integrates vector search directly into your primary database, simplifying your stack. For Next.js full-stack development, this means you can manage application data and vector embeddings in the same database, join them in a single query, and avoid a round trip to a separate vector store.

Implementing RAG with Supabase and Next.js

The RAG architecture involves two primary steps: retrieval and generation. For retrieval, your Agentic AI system queries a knowledge base to find relevant documents or data snippets. These retrieved pieces of information are then fed to a large language model (LLM) as context, allowing the LLM to generate a more informed and accurate response.
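The hand-off between the two steps can be sketched as a small pure function that turns retrieved snippets into LLM context. This is an illustrative assumption, not a prescribed format: the RetrievedChunk shape, the buildRagPrompt name, the instruction wording, and the character budget are all hypothetical, and the actual call to an LLM is left out.

```typescript
// Hypothetical shape of one retrieved snippet (id, text, and a relevance score).
interface RetrievedChunk {
  id: string;
  content: string;
  similarity: number;
}

// Assemble a prompt from the question and the most relevant chunks,
// stopping before the context exceeds a rough character budget.
function buildRagPrompt(
  question: string,
  chunks: RetrievedChunk[],
  maxContextChars = 4000
): string {
  const ordered = [...chunks].sort((a, b) => b.similarity - a.similarity);
  const parts: string[] = [];
  let used = 0;
  for (const chunk of ordered) {
    const block = `[${chunk.id}] ${chunk.content}`;
    if (used + block.length > maxContextChars) break;
    parts.push(block);
    used += block.length;
  }
  const context = parts.length > 0 ? parts.join("\n\n") : "(no relevant context found)";
  return [
    "Answer the question using only the context below. If the context is insufficient, say so.",
    "",
    "Context:",
    context,
    "",
    `Question: ${question}`,
  ].join("\n");
}

const examplePrompt = buildRagPrompt("How do I integrate a webhook?", [
  { id: "doc-2", content: "Event listeners let you react to platform events.", similarity: 0.81 },
  { id: "doc-1", content: "Webhooks deliver events to your endpoint over HTTP.", similarity: 0.93 },
]);
console.log(examplePrompt);
```

Sorting by similarity before applying the budget means that, if something has to be dropped, it is the least relevant material.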

Here's a simplified example of how you might query for semantically similar documents in a Next.js API route using Supabase:

import { createClient } from '@supabase/supabase-js';

// Assume these are loaded from environment variables
const supabaseUrl = process.env.NEXT_PUBLIC_SUPABASE_URL!;
const supabaseAnonKey = process.env.NEXT_PUBLIC_SUPABASE_ANON_KEY!;

const supabase = createClient(supabaseUrl, supabaseAnonKey);

// Shape of one row returned by the match_documents RPC
interface Document {
  id: string;
  content: string;
  embedding: number[];
  similarity: number;
}

export async function getSimilarDocuments(queryEmbedding: number[], limit = 5): Promise<Document[]> {
  if (!queryEmbedding || queryEmbedding.length === 0) {
    throw new Error("Query embedding is required.");
  }

  const { data, error } = await supabase.rpc('match_documents', {
    query_embedding: queryEmbedding, // This is your user query's embedding
    match_threshold: 0.78, // Adjust as needed for relevance
    match_count: limit // Number of documents to return
  });

  if (error) {
    console.error('Error fetching similar documents:', error);
    throw error;
  }

  // Fall back to an empty array if the RPC returns no data
  return (data ?? []) as Document[];
}

// Example RPC function 'match_documents' in Supabase:
// CREATE OR REPLACE FUNCTION match_documents(
//   query_embedding vector(1536),
//   match_threshold float,
//   match_count int
// )
// RETURNS TABLE (
//   id uuid,
//   content text,
//   embedding vector(1536),
//   similarity float
// )
// LANGUAGE plpgsql AS $$
// BEGIN
//   RETURN QUERY
//   SELECT
//     documents.id,
//     documents.content,
//     documents.embedding,
//     (documents.embedding <#> query_embedding) * -1 AS similarity
//   FROM documents
//   WHERE (documents.embedding <#> query_embedding) * -1 > match_threshold
//   ORDER BY documents.embedding <#> query_embedding
//   LIMIT match_count;
// END;
// $$;

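This match_documents RPC function relies on pgvector's <#> operator, which returns the negative inner product of two vectors. Smaller values mean closer vectors, so ordering by the operator in ascending order puts the best matches first, and multiplying by -1 turns the result into a similarity score. The inner product equals cosine similarity only when the embeddings are normalized to unit length, as OpenAI's are; for unnormalized embeddings, use the cosine distance operator <=> and compute similarity as 1 minus the distance. The queryEmbedding would typically be generated by an embedding model (e.g., OpenAI, Cohere, or an open-source model) from the user's query, and it must come from the same model, with the same dimension (1536 here), as the stored document embeddings.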
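An inner product only behaves like cosine similarity when both vectors have unit length. If your embedding model does not guarantee that, one option is to normalize vectors before storing and querying them. The sketch below shows the idea; toUnitVector and innerProduct are hypothetical helper names, not part of pgvector or supabase-js.

```typescript
// Plain inner (dot) product of two equal-length vectors.
function innerProduct(a: number[], b: number[]): number {
  if (a.length !== b.length) {
    throw new Error("Vectors must have the same length.");
  }
  let sum = 0;
  for (let i = 0; i < a.length; i++) {
    sum += a[i] * b[i];
  }
  return sum;
}

// Scale a vector to length 1 so that inner products between
// normalized vectors equal their cosine similarity.
function toUnitVector(v: number[]): number[] {
  const norm = Math.sqrt(innerProduct(v, v));
  if (norm === 0) {
    throw new Error("Cannot normalize a zero vector.");
  }
  return v.map((x) => x / norm);
}

// Two vectors pointing in the same direction but with different lengths.
const shortVec = [3, 4];
const longVec = [30, 40];

const rawScore = innerProduct(shortVec, longVec); // grows with vector length
const normalizedScore = innerProduct(toUnitVector(shortVec), toUnitVector(longVec)); // direction only
console.log({ rawScore, normalizedScore });
```

The raw inner product rewards long vectors, while the normalized score depends only on direction, which is what a fixed threshold such as 0.78 implicitly assumes.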

Scaling Your Semantic Search

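For larger datasets, efficient indexing is vital. Without an index, pgvector performs an exact scan over every row; with one, it performs approximate nearest-neighbor search, trading a small amount of recall for speed. pgvector supports two index types, IVFFlat and HNSW. HNSW (Hierarchical Navigable Small World) generally offers a better speed-recall tradeoff for high-dimensional vectors, at the cost of slower index builds and higher memory use. The index must be created with the operator class that matches the operator in your query (vector_ip_ops for <#>, vector_cosine_ops for <=>). Properly configuring these indexes within your Supabase Postgres instance helps your Agentic AI remain responsive as your knowledge base grows.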
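As a rough sketch of what such an index looks like, the helper below generates a pgvector HNSW index statement that you could paste into a migration. The function name buildHnswIndexSql, the index naming scheme, and the documents/embedding defaults are assumptions made for this example; m = 16 and ef_construction = 64 are pgvector's documented defaults, and vector_ip_ops is chosen to match the <#> operator used by match_documents.

```typescript
// Hypothetical options for generating an HNSW index statement.
interface HnswIndexOptions {
  table?: string;
  column?: string;
  opclass?: "vector_ip_ops" | "vector_cosine_ops" | "vector_l2_ops";
  m?: number; // max connections per graph node
  efConstruction?: number; // candidate list size while building the index
}

// Only allow simple unquoted identifiers so the generated SQL stays safe.
function assertIdentifier(name: string): void {
  if (!/^[a-z_][a-z0-9_]*$/.test(name)) {
    throw new Error(`Invalid SQL identifier: ${name}`);
  }
}

function buildHnswIndexSql(options: HnswIndexOptions = {}): string {
  const {
    table = "documents",
    column = "embedding",
    opclass = "vector_ip_ops",
    m = 16,
    efConstruction = 64,
  } = options;
  assertIdentifier(table);
  assertIdentifier(column);
  if (!Number.isInteger(m) || m <= 0 || !Number.isInteger(efConstruction) || efConstruction <= 0) {
    throw new Error("m and efConstruction must be positive integers.");
  }
  const indexName = `${table}_${column}_hnsw_idx`;
  return (
    `CREATE INDEX IF NOT EXISTS ${indexName} ON ${table} ` +
    `USING hnsw (${column} ${opclass}) ` +
    `WITH (m = ${m}, ef_construction = ${efConstruction});`
  );
}

const defaultIndexSql = buildHnswIndexSql();
console.log(defaultIndexSql);
```

If you switch the query to the cosine distance operator <=>, pass opclass: "vector_cosine_ops" so the planner can still use the index.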

Conclusion

Empowering Agentic AI with contextual awareness means moving beyond naive keyword matching. Vector embeddings, stored and queried directly in Postgres through Supabase and pgvector, provide the semantic depth required. This foundation, combined with Next.js for your API routes and frontend, delivers a powerful, scalable architecture for advanced full-stack development. Ready to build your next intelligent application? Learn more on our [Blog Hub](/blog).